10 Web Scraping Challenges in 2026 and How Data Teams Can Stay Ahead

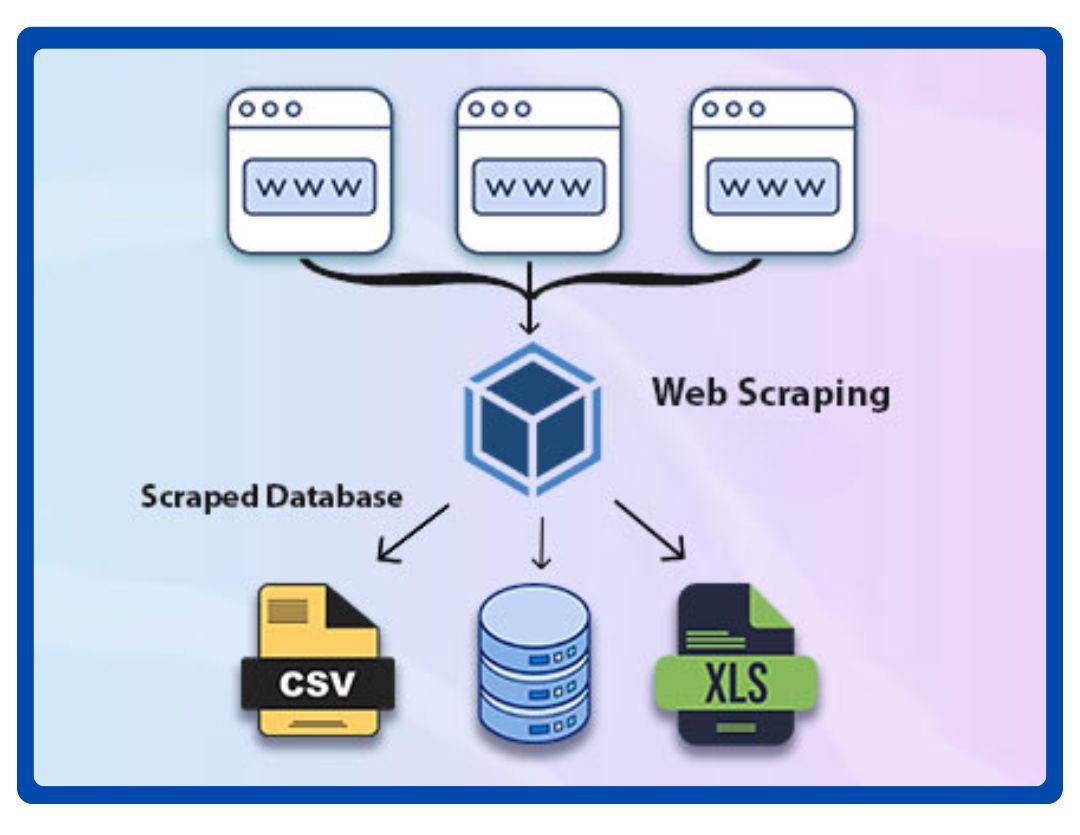

In 2026, more businesses than ever rely on website data extraction to track prices, study product trends, and understand what competitors are doing online. From ecommerce brands to market research teams, online data plays a big role in how companies plan, price, and grow. Product reviews, listings, and pricing details all help businesses decide what to sell and how to compete.

However, collecting this information is becoming harder each year. Many websites now use complex layouts, hidden content, and tighter access rules that limit how data can be collected. These growing web scraping challenges make it difficult for data teams to get clean, usable information from the web.

At the same time, businesses expect higher data quality than before. They need accurate prices, complete product details, and reliable review data they can trust. As websites continue to change, data teams must find better ways to keep their data consistent, useful, and ready to support smart business decisions.

The 10 Most Important Web Scraping Challenges in 2026

Collecting data from websites sounds simple, but in 2026, it comes with many hurdles. Businesses rely on this information for pricing, product research, reviews, and competitor insights. However, websites are changing faster, and access is getting stricter. Data teams face challenges that can slow down projects or reduce accuracy. Here are the ten most important web scraping challenges data teams need to know.

1. Dynamic Websites Create Data Extraction Challenges

Many modern websites use JavaScript, APIs, and interactive elements to load content. This makes collecting accurate data much harder. Standard methods may only capture the page layout and miss important details like prices, reviews, or product specifications. These types of data extraction challenges require careful planning to get the full picture.

Key issues include:

- Content that only appears after a user scrolls or clicks

- Information loaded through APIs instead of visible HTML

- Interactive elements like drop-downs or tabs that hide data

- Pages that refresh or change dynamically as users navigate

How to Fix It:

To overcome these challenges, data teams should identify which elements load dynamically and, where possible, read the API endpoints behind them directly. For content that requires interaction, simulate user actions such as scrolling or clicking tabs. Monitoring pages regularly and validating data after collection help keep the results accurate and complete for reliable business insights.
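When a page fills in prices and reviews from a JSON endpoint, reading that response is usually sturdier than parsing the rendered markup. The sketch below assumes a hypothetical payload shape (`product`, `price.amount`, `price.currency`, `reviews`); real endpoints will differ, so treat every field name as a placeholder.

```python
import json
from decimal import Decimal


def product_from_payload(raw: str) -> dict:
    """Flatten a JSON product response into the fields a pricing team needs.

    The payload layout is hypothetical, not any real site's API.
    """
    # parse_float=Decimal keeps prices exact instead of using binary floats.
    data = json.loads(raw, parse_float=Decimal)
    product = data["product"]
    price = product.get("price") or {}
    reviews = product.get("reviews") or []
    amount = price.get("amount")
    return {
        "name": product.get("name"),
        "price": Decimal(amount) if amount is not None else None,
        "currency": price.get("currency"),
        "review_count": len(reviews),
    }
```

Missing optional fields come back as `None` rather than raising, which makes gaps easy to count during validation.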

2. Website Data Extraction Becomes Less Predictable

Some websites load content in layers, meaning not all information is available at the same time. Prices, product details, and reviews may appear in separate sections or only after certain actions. This makes website data extraction unpredictable and can lead to missing or incomplete datasets if not managed carefully.

Common challenges include:

- Some pages show only summary information at first, with full details hidden below

- Different pages on the same site may use slightly different layouts

- Images, descriptions, or specifications may load separately from the main content

- Pagination or “load more” sections may behave differently across categories

How to Fix It:

To manage these variations, data teams should map out all content layers and check multiple sections of each page. Plan workflows to handle different layouts and loading behaviors, and ensure that paginated or “load more” content is included. Regularly validating the extracted data helps catch gaps and maintain accuracy, ensuring that the collected information is complete and reliable for business decisions.
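Paginated and "load more" sections can be handled with one loop that keeps asking for the next page until the source says there is none. This is a minimal sketch: `fetch_page` is a hypothetical callable supplied by the team, and the `items` / `next` keys are assumed names, not a real site's response format.

```python
from typing import Callable, Iterator, Optional


def iter_all_items(
    fetch_page: Callable[[Optional[str]], dict],
    max_pages: int = 500,
) -> Iterator[dict]:
    """Yield items from every page until the source reports no next cursor.

    `fetch_page(cursor)` is a hypothetical callable returning
    {"items": [...], "next": <cursor or None>}; pass None for the first page.
    """
    cursor = None
    seen = set()
    for _ in range(max_pages):  # hard cap guards against endless loops
        page = fetch_page(cursor)
        yield from page.get("items", [])
        cursor = page.get("next")
        if cursor is None or cursor in seen:
            return  # finished, or the site sent a cursor we already visited
        seen.add(cursor)
```

The `seen` set and the page cap exist because some categories repeat the last page instead of signalling the end.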

3. Changing Page Structures Cause Web Scraping Problems

Websites often update their design to improve user experience or refresh content. Even small changes, like moving a price tag, renaming a class, or adding new sections, can break extraction workflows. These web scraping problems make it hard for data teams to collect consistent and accurate information.

Key issues include:

- HTML elements changing location or name, causing extraction rules to fail

- Unexpected additions like banners or pop-ups that interfere with data capture

- New page templates for specific categories that differ from standard layouts

- Minor updates that go unnoticed but create errors in automated processes

How to Fix It:

Data teams should monitor websites regularly to detect design changes early. Adjust extraction rules promptly to match new layouts and test workflows across multiple pages. Using flexible selectors that rely on consistent HTML elements rather than exact positions reduces the chance of errors. Continuous validation ensures collected data remains accurate and reliable for business decisions.
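One way to make selectors more flexible is to list several extraction rules, ordered from most to least stable, and take the first one that returns text. The sketch below uses only Python's standard `html.parser`; the attribute names in the usage note (`itemprop`, `class`) are assumptions about how a page might mark up a price.

```python
from html.parser import HTMLParser
from typing import Iterable, Optional, Tuple

VOID_TAGS = {"br", "hr", "img", "input", "link", "meta"}  # tags with no end tag


class _AttrText(HTMLParser):
    """Collect the text of the first element whose attribute contains a token."""

    def __init__(self, attr: str, token: str):
        super().__init__()
        self.attr, self.token = attr, token
        self.depth = 0  # nesting depth inside the matched element
        self.done = False
        self.parts = []

    def handle_starttag(self, tag, attrs):
        if self.done or tag in VOID_TAGS:
            return
        if self.depth:
            self.depth += 1
        elif any(k == self.attr and v and self.token in v.split() for k, v in attrs):
            self.depth = 1

    def handle_endtag(self, tag):
        if self.depth and tag not in VOID_TAGS:
            self.depth -= 1
            self.done = self.depth == 0

    def handle_data(self, data):
        if self.depth:
            self.parts.append(data)


def first_match(html: str, rules: Iterable[Tuple[str, str]]) -> Optional[str]:
    """Try each (attribute, token) rule in order; return the first non-empty text."""
    for attr, token in rules:
        finder = _AttrText(attr, token)
        finder.feed(html)
        finder.close()
        text = "".join(finder.parts).strip()
        if text:
            return text
    return None
```

With rules such as `[("itemprop", "price"), ("class", "price-tag")]`, a renamed class does not break extraction as long as the semantic attribute is still present. When every rule fails, the `None` result is a useful signal that the layout has changed.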

Contact TagX today to get structured, high-quality data that powers smarter business decisions.

4. Blocking and Access Restrictions Are Growing

Many websites now use measures to control who can access their data. IP filtering, rate limits, and location-based restrictions make it harder for data teams to collect information consistently. When too many requests come from a single IP, websites may block access temporarily or permanently.

Common challenges include:

- IP addresses being blocked after repeated requests

- Limits on how many pages can be accessed in a set time

- Regional restrictions preventing access from certain countries

- Redirects to error or login pages that stop data collection

How to Fix It:

Data teams should plan access carefully to avoid triggering restrictions. This includes spreading requests over time, monitoring error responses, and planning alternate routes to collect data when regional restrictions apply. Regularly reviewing access patterns helps maintain uninterrupted and accurate data collection without losing valuable information.
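Spreading requests over time and reacting to error responses can be sketched as a retry loop that waits longer each time the site signals overload. `fetch` here is a hypothetical callable returning `(status_code, body)`, and the delay values are illustrative defaults, not recommendations for any particular site.

```python
import time
from typing import Callable, Tuple

RETRYABLE = {429, 503}  # "too many requests" and "service unavailable"


def backoff_delay(attempt: int, base: float = 1.0, cap: float = 60.0) -> float:
    """Exponential delay for the given zero-based attempt, capped at `cap` seconds."""
    return min(cap, base * (2 ** attempt))


def fetch_politely(
    fetch: Callable[[str], Tuple[int, str]],
    url: str,
    max_attempts: int = 5,
    sleep: Callable[[float], None] = time.sleep,
) -> Tuple[int, str]:
    """Call `fetch(url)` and slow down whenever the site signals overload.

    `fetch` is a hypothetical callable returning (status_code, body).
    """
    status, body = fetch(url)
    for attempt in range(max_attempts - 1):
        if status not in RETRYABLE:
            break
        sleep(backoff_delay(attempt))
        status, body = fetch(url)
    return status, body
```

Passing `sleep` as a parameter keeps the function easy to test, and logging each retryable status gives the team the error-response history it needs when reviewing access patterns.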

5. Challenges in Web Scraping for Large Product Catalogs

Ecommerce websites often have thousands of products, making it difficult to track every item accurately. Extracting data at this scale can slow down processes and increase the risk of missing or duplicated information. These challenges in web scraping become more serious as the number of SKUs grows.

Key issues include:

- Large product lists that take longer to process

- Inconsistent product details across pages

- Multiple categories or variants that complicate extraction

- Updates happening at different times across products

How to Fix It:

To handle large catalogs effectively, data teams should prioritize critical products and categories and structure workflows to process data in batches. Standardizing product details and monitoring for inconsistencies help keep the catalog complete. Planning extraction schedules around update cycles helps capture the latest information while reducing errors, so businesses can rely on accurate data for pricing, inventory, and market analysis.
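Prioritizing and batching can be as simple as sorting SKUs by importance and slicing the result into fixed-size groups. In this sketch the `sku` and `priority` keys are hypothetical field names, and a lower priority number means "process first".

```python
from itertools import islice
from typing import Iterable, Iterator, List, TypeVar

T = TypeVar("T")


def batched(items: Iterable[T], size: int) -> Iterator[List[T]]:
    """Yield consecutive lists of at most `size` items."""
    if size < 1:
        raise ValueError("size must be at least 1")
    it = iter(items)
    while batch := list(islice(it, size)):
        yield batch


def plan_batches(products: Iterable[dict], size: int) -> List[List[str]]:
    """Order SKUs by priority (lower number first), then split into batches.

    The `sku` and `priority` keys are hypothetical field names.
    """
    ordered = sorted(products, key=lambda p: p["priority"])
    return list(batched((p["sku"] for p in ordered), size))
```

If a run is interrupted, the batches that already finished are the ones covering the highest-priority products.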

6. Data Inconsistency Across Multiple Websites

When collecting data from multiple websites, product information often doesn’t match. Prices, names, and descriptions can vary between platforms, and formats may differ as well. These inconsistencies make it harder for businesses to analyze and compare data accurately.

Common challenges include:

- Different naming conventions for the same product

- Price variations across websites

- Missing or incomplete product descriptions

- Varied formats for reviews, images, or specifications

How to Fix It:

Data teams should standardize and clean information after collection. Matching similar products across platforms, aligning naming conventions, and formatting prices and specifications consistently make the data comparable from one site to the next. Regular validation helps prevent errors and allows businesses to make informed decisions based on reliable, unified datasets.
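As an illustration of standardizing after collection, the sketch below converts displayed prices into exact decimals and reduces product names to a comparable form. The separator rule is a heuristic assumption (a final separator followed by one or two digits is the decimal point), so it should be checked against each source before use.

```python
import re
from decimal import Decimal


def parse_price(text: str) -> Decimal:
    """Convert a displayed price such as "$1,299.00" or "1.299,00 €" to Decimal.

    Heuristic: a final separator followed by one or two digits is the decimal
    point; every other separator is treated as a thousands mark.
    """
    digits = re.sub(r"[^\d.,]", "", text)
    if not re.search(r"\d", digits):
        raise ValueError(f"no digits in price: {text!r}")
    match = re.search(r"[.,](\d{1,2})$", digits)
    if match:
        whole = re.sub(r"[.,]", "", digits[: match.start()]) or "0"
        return Decimal(f"{whole}.{match.group(1)}")
    return Decimal(re.sub(r"[.,]", "", digits))


def normalize_name(name: str) -> str:
    """Lowercase, replace punctuation with spaces, and collapse whitespace.

    ASCII-only on purpose; names in other scripts need a different rule.
    """
    return re.sub(r"[^a-z0-9]+", " ", name.lower()).strip()
```

Normalized names are only a first pass at matching; two listings with the same normalized name still deserve a check against a stable identifier such as a SKU or barcode when one is available.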

7. Delayed Updates Affect Market Intelligence

Many websites update their content at different times, which can lead to delays in collecting accurate information. Slow refresh cycles mean that prices, product availability, and reviews may be outdated by the time they are extracted. This makes website data extraction less reliable for businesses that rely on timely insights.

Common challenges include:

- Price changes not reflected immediately across pages

- New product launches appear late in the extracted data

- Reviews or ratings updating slowly, affecting sentiment analysis

- Delays in competitor data make market comparisons inaccurate

How to Fix It:

Data teams should align extraction schedules with website update cycles to capture the most current information. Prioritizing high-value pages and monitoring key updates helps minimize gaps. Implementing validation checks ensures data is fresh and reliable, supporting informed decisions on pricing, inventory, and market strategy.
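A freshness check can be expressed as a maximum allowed age per kind of record, with anything older flagged for re-collection. The `kind` and `scraped_at` fields and the age limits in the usage note are hypothetical; real limits depend on how often each source actually updates.

```python
from datetime import datetime, timedelta
from typing import Dict, Iterable, List


def stale_records(
    records: Iterable[dict],
    now: datetime,
    max_age_by_kind: Dict[str, timedelta],
) -> List[dict]:
    """Return records older than the limit configured for their kind.

    Each record needs a `kind` key and a timezone-aware `scraped_at`
    datetime; both field names are hypothetical.
    """
    return [
        r for r in records
        if now - r["scraped_at"] > max_age_by_kind[r["kind"]]
    ]
```

For example, a team might allow prices to be six hours old but reviews seven days old, by passing `{"price": timedelta(hours=6), "review": timedelta(days=7)}`.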

8. Data Quality Issues Reduce Business Value

Even after collecting data, issues like missing fields, duplicates, or broken records can make it hard to use effectively. These problems reduce the value of the data and can lead to inaccurate business insights. Handling these data extraction challenges properly is essential to maintain reliable analytics.

Common issues include:

- Missing product details such as price, description, or SKU

- Duplicate entries that skew reports and trends

- Broken links or incomplete records affecting downstream analysis

- Inconsistent formatting across datasets

How to Fix It:

Data teams should regularly clean and validate datasets to ensure accuracy and completeness. Removing duplicates, filling missing fields when possible, and standardizing formats keeps the data reliable. High-quality data allows businesses to make smarter decisions, avoid costly mistakes, and maximize the value of insights collected across multiple websites.
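The cleaning step described above can be sketched as a single pass that separates usable records from rejected ones and records why each rejection happened. The required field list is a hypothetical example.

```python
from typing import Iterable, List, Tuple

REQUIRED = ("sku", "name", "price")  # hypothetical required fields


def clean_records(records: Iterable[dict]) -> Tuple[List[dict], List[dict]]:
    """Split records into (clean, rejected).

    A record is rejected when a required field is missing or empty, or when
    its SKU has already been seen; the first occurrence of each SKU wins.
    """
    clean, rejected, seen = [], [], set()
    for rec in records:
        missing = [f for f in REQUIRED if rec.get(f) in (None, "")]
        if missing:
            rejected.append({**rec, "reason": "missing: " + ", ".join(missing)})
        elif rec["sku"] in seen:
            rejected.append({**rec, "reason": "duplicate sku"})
        else:
            seen.add(rec["sku"])
            clean.append(rec)
    return clean, rejected
```

Keeping the rejected records, rather than silently dropping them, shows which sources produce the most gaps and where extraction rules need attention.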

9. Compliance and Website Policies Create Risk

Collecting data from websites is not just a technical challenge. It also comes with legal and regulatory considerations. Many sites have terms of service that restrict how data can be used. Regional laws and platform-specific rules can also limit access or impose penalties for misuse.

Common challenges include:

- Legal restrictions on copying or storing website content

- Regional data privacy laws that vary by country

- Platform-specific policies that limit what data can be collected

- Risk of penalties or account suspension for non-compliance

How to Fix It:

Data teams should stay informed about legal and policy requirements before collecting data. Reviewing website terms, adhering to regional regulations, and following platform-specific rules help keep collection safe, ethical, and compliant. Planning extraction within these boundaries reduces risk and supports reliable, long-term access to valuable data.
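One policy signal that can be checked automatically is a site's robots.txt file. The sketch below uses Python's standard `urllib.robotparser` on robots.txt text that has already been downloaded; the bot name and URLs in the usage note are placeholders. It covers robots.txt only and assumes terms of service and regional laws are reviewed separately.

```python
from typing import Iterable, List
from urllib.robotparser import RobotFileParser


def build_policy(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt content that has already been downloaded."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def permitted_urls(
    parser: RobotFileParser, user_agent: str, urls: Iterable[str]
) -> List[str]:
    """Keep only the URLs the parsed rules allow `user_agent` to fetch."""
    return [u for u in urls if parser.can_fetch(user_agent, u)]
```

For a file containing `Disallow: /private/`, a call such as `permitted_urls(policy, "example-bot", urls)` drops every URL under `/private/`. If the file declares a crawl delay, `policy.crawl_delay("example-bot")` returns it so the collection schedule can honor it.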

10. Scaling Web Scraping Across Markets

Expanding data collection to multiple countries or platforms adds a new layer of complexity. Each website may have different layouts, languages, and content structures, making it harder to maintain consistency and accuracy.

Common challenges include:

- Different page designs and structures across regions

- Multiple languages that require proper handling and translation

- Varying product categories and formats on different platforms

- Increased risk of missing or inconsistent data when scaling

How to Fix It:

Data teams should plan workflows for regional differences and monitor each market carefully. Standardizing formats, translating content where needed, and validating collected data help keep results consistent across countries and platforms. Proper planning and ongoing checks help businesses extract reliable insights from multiple regions without sacrificing accuracy or efficiency.
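Regional differences are easier to manage when each market's quirks live in configuration rather than in separate scripts. The sketch below maps localized field labels to one canonical schema; the market codes, currencies, and labels are illustrative assumptions, not a complete list.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Market:
    currency: str
    field_labels: Dict[str, str]  # localized label -> canonical field name


# Hypothetical markets and labels, for illustration only.
MARKETS = {
    "de": Market("EUR", {"Preis": "price", "Bezeichnung": "name"}),
    "fr": Market("EUR", {"Prix": "price", "Nom": "name"}),
    "us": Market("USD", {"Price": "price", "Name": "name"}),
}


def to_canonical(market_code: str, scraped: Dict[str, str]) -> dict:
    """Rename localized labels to canonical fields and tag market and currency.

    Labels with no mapping are kept under `unmapped` so gaps stay visible.
    """
    market = MARKETS[market_code]
    out = {"market": market_code, "currency": market.currency}
    unmapped = {}
    for label, value in scraped.items():
        field = market.field_labels.get(label)
        if field:
            out[field] = value
        else:
            unmapped[label] = value
    if unmapped:
        out["unmapped"] = unmapped
    return out
```

Adding a new market then means adding one configuration entry, and a growing `unmapped` count for a region is an early sign that its pages use labels the workflow does not yet recognize.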

How to Overcome Web Scraping Challenges with the Right Data Strategy

Collecting accurate and reliable data from websites is harder than ever. Businesses need a clear approach to stay ahead of common issues like dynamic pages, inconsistent data, and access restrictions. Knowing how to overcome web scraping challenges is key to turning raw website data into actionable insights.

A strong data strategy starts with prioritizing the most important information for business decisions. Teams should monitor websites regularly to catch changes in structure or content and standardize data formats to reduce inconsistencies across sources. Planning collection schedules to account for delayed updates and ensuring compliance with legal and website policies helps maintain accuracy and reliability.

By implementing these practices, businesses can reduce errors, improve data quality, and make smarter decisions. A proactive approach ensures that teams stay ahead even as websites evolve and become more complex.

How TagX Helps Businesses Stay Ahead of Web Scraping Challenges

Web scraping challenges can slow down data collection and affect business decisions. TagX helps businesses overcome these issues by providing reliable, structured, and actionable data services.

Structured Ecommerce Data

TagX delivers clean and organized ecommerce data that businesses can use to track products, monitor pricing, and analyze competitor trends. Having structured data makes it easier to generate insights and make timely decisions.

Comprehensive Data Collection Services

TagX handles the complex task of gathering data from websites, ensuring that teams have consistent and accurate information without worrying about access issues, layout changes, or missing content.

APIs for Product, Pricing, Job, and Market Intelligence

With TagX APIs, businesses can access large volumes of information efficiently. Whether it’s product details, pricing updates, job listings, or market trends, TagX provides reliable endpoints for easy integration into business workflows.

Data Cleaning and Processing

Collected data is only valuable if it is accurate and ready to use. TagX ensures that information is cleaned, validated, and processed so businesses can focus on analysis and decision-making instead of correcting errors.

By leveraging TagX services, businesses can tackle common web scraping challenges effectively. Teams can rely on accurate, complete, and ready-to-use data to stay competitive in a fast-changing online environment.

Conclusion

Web scraping challenges are only expected to grow in 2026 as websites become more complex and stricter about access. Businesses that rely on outdated or unstable scraping workflows risk missing important insights and making decisions based on incomplete information.

To stay competitive, companies need reliable and structured data. Proper website data extraction ensures that product details, pricing, and reviews are accurate, complete, and ready for analysis. Addressing common challenges in web scraping allows teams to maintain high-quality data without wasting time on errors or gaps.

TagX helps businesses overcome these hurdles by providing clean, processed, and actionable data services. With structured ecommerce data, comprehensive collection services, and APIs for product, pricing, job, and market intelligence, TagX enables data-driven decision-making at scale. Teams can focus on growth and strategy while relying on data that is accurate, consistent, and ready to use.

For businesses looking to tackle these web scraping challenges effectively, contact TagX today to learn how our data services can support your goals and deliver reliable insights.

FAQs

1. What industries face the biggest web scraping challenges in 2026?

Industries like ecommerce, travel, finance, and real estate face the toughest web scraping challenges. These sectors rely heavily on competitor pricing, product availability, or market trends, and the websites often have complex layouts, frequent updates, or strict access restrictions.

2. How does TagX handle large-scale web scraping across markets?

TagX helps businesses collect data from multiple countries and platforms efficiently. Our data collection services account for differences in website layouts, languages, and formats. We standardize and clean the data so it is consistent across regions, ensuring businesses can rely on accurate insights for international pricing, inventory management, and market analysis.

3. Can manual monitoring replace automated web scraping?

Manual monitoring can help track small amounts of data, but it is slow, prone to errors, and difficult to scale. For businesses managing thousands of products or multiple websites, structured data services are more reliable and efficient than manual methods.

4. How often should companies update their web scraping workflows?

Companies should review and update their workflows regularly, ideally every few weeks or after major website updates. Frequent monitoring ensures extraction rules remain accurate, prevents data gaps, and maintains high-quality insights for business decisions.

5. What are the most common mistakes companies make when collecting website data?

Common mistakes include ignoring site layout changes, failing to standardize or clean data, underestimating delays in updates, and neglecting compliance rules. These errors can lead to inaccurate analysis, missed opportunities, and unreliable insights.